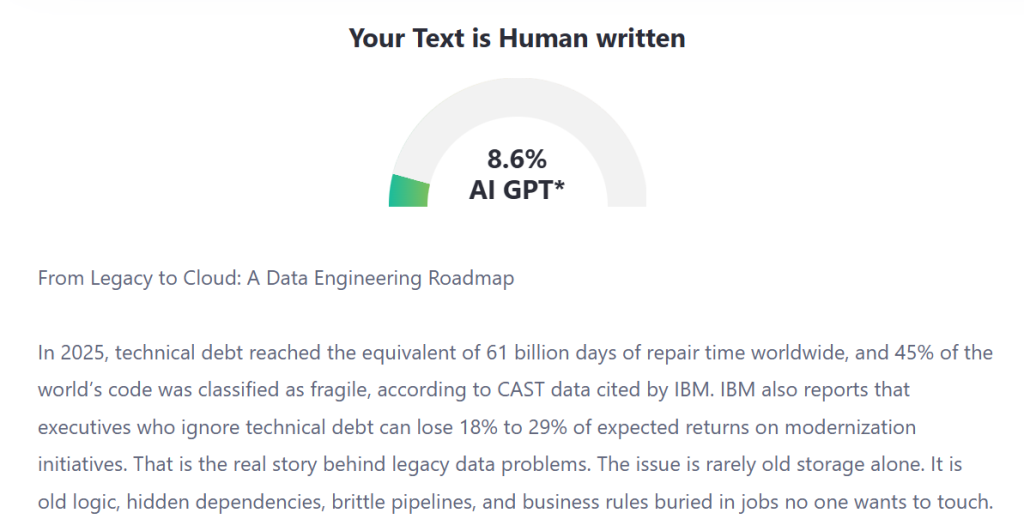

In 2025, technical debt reached the equivalent of 61 billion days of repair time worldwide, and 45% of the world’s code was classified as fragile, according to CAST data cited by IBM. IBM also reports that executives who ignore technical debt can lose 18% to 29% of expected returns on modernization initiatives. That is the real story behind legacy data problems. The issue is rarely old storage alone. It is old logic, hidden dependencies, brittle pipelines, and business rules buried in jobs no one wants to touch.

That is why most migration programs start in the wrong place. They begin with tools, vendors, and target architecture diagrams. They should begin with harder questions: which data still matters, which processing still earns its keep, and which historical habits are draining money every month? Good data modernization services do not treat cloud as a destination. They treat it as a chance to clean up years of accumulated friction.

This article lays out a practical roadmap for teams moving from legacy estates to cloud-first data platforms. Not a brochure version. The kind of roadmap that accounts for stale reports, overloaded batch windows, undocumented joins, audit pressure, and political resistance between application teams and analytics teams.

Why do legacy data stacks become expensive long before they break?

Legacy data environments usually look stable from the outside. Reports run. Files land. Dashboards refresh. But underneath, the operating model is often held together by custom scripts, duplicated tables, inconsistent definitions, and manual interventions.

A legacy stack becomes risky when three things happen at the same time:

- Business teams ask for faster answers

- Source systems change more often

- The data team cannot safely change pipeline logic without side effects

That is when organizations start shopping for data modernization services. Not because cloud is trendy, but because the old estate has become too costly to maintain with confidence.

Here is the common pattern I see in older environments:

| Legacy condition | What it looks like in practice | Why it blocks progress |
| --- | --- | --- |
| Tight coupling between source and reporting layers | One schema change breaks downstream jobs | Every change request becomes a mini incident |
| Batch-heavy processing | Overnight windows keep growing | The business waits too long for usable data |
| Undefined data ownership | Nobody can approve lineage or usage rules | Governance becomes reactive |
| Tool sprawl | ETL, scheduling, metadata, and quality checks sit in separate silos | Teams spend time reconciling systems instead of improving them |
| Duplicate storage | Multiple copies of the same business entity | Cost goes up and trust goes down |

The cloud does help, but it does not fix bad design by itself. Google’s current Search guidance is useful here in an indirect way: it stresses original, people-first content and clear authorship, and it treats trust as the most important component of E-E-A-T (experience, expertise, authoritativeness, and trustworthiness). The same logic applies to data programs. If the operating model lacks ownership, clarity, and trust, the platform choice will not save it.

The migration roadmap steps that actually reduce risk

A credible roadmap for cloud data migration is not one large move. It is a sequence of decisions that reduce uncertainty at each stage. The cleanest programs tend to follow six steps.

1. Audit the data estate before you design the target state

Do not start with the target platform. Start with an evidence-based inventory.

Map:

- Business-critical datasets

- Pipeline frequency and latency

- Source system dependencies

- Known data quality defects

- Regulatory and retention requirements

- Consumers of each dataset

This is the point where many teams discover that 15% to 30% of their scheduled jobs serve no active business purpose. Old reports linger because no one wants to be the person who turns them off. A serious data modernization services engagement should include structured retirement decisions, not just migration plans.
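A minimal sketch of how the retirement review could be seeded from the inventory, assuming the audit produces one record per scheduled job. The `ScheduledJob` fields, the `retirement_candidates` helper, and the 180-day idle window are illustrative assumptions, not a prescribed method.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class ScheduledJob:
    # Hypothetical inventory record; the field names are assumptions.
    name: str
    consumers: List[str] = field(default_factory=list)  # known downstream users
    last_read: Optional[date] = None  # last time anyone read the output


def retirement_candidates(jobs, today, idle_days=180):
    """Return names of jobs with no known consumer or no recent reads."""
    cutoff = today - timedelta(days=idle_days)
    flagged = []
    for job in jobs:
        never_or_long_unread = job.last_read is None or job.last_read < cutoff
        if not job.consumers or never_or_long_unread:
            flagged.append(job.name)
    return flagged


inventory = [
    ScheduledJob("daily_sales", ["cfo_dashboard"], date(2025, 6, 1)),
    ScheduledJob("legacy_extract"),
    ScheduledJob("old_region_report", ["ops_team"], date(2024, 1, 1)),
]
print(retirement_candidates(inventory, today=date(2025, 6, 30)))
```

The result is a review list to walk through with owners, not an automatic delete list. Someone still has to make the call to turn a job off.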

2. Segment workloads by business value and technical difficulty

Not every workload deserves the same treatment. Some should be rehosted as an interim step. Some should be rebuilt. Some should be retired. AWS and Microsoft both emphasize planning modernization in phases and choosing a strategy based on workload characteristics, not ideology.

A simple triage model works well:

- Move first: stable workloads with clear ownership

- Redesign next: high-value workloads with brittle logic

- Retire soon: low-usage assets with unclear purpose

- Delay intentionally: politically sensitive or high-risk domains until controls are ready

This keeps cloud data migration from becoming a giant all-or-nothing program.
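The triage model above can be written down as a small rule set so that every workload is classified the same way. This is a sketch under stated assumptions: the `Workload` attributes, the rule order, and the `NEEDS_REVIEW` fallback are hypothetical choices, not part of any vendor framework.

```python
from dataclasses import dataclass
from enum import Enum


class Treatment(Enum):
    MOVE_FIRST = "move first"
    REDESIGN_NEXT = "redesign next"
    RETIRE_SOON = "retire soon"
    DELAY = "delay intentionally"
    NEEDS_REVIEW = "needs review"  # assumption: fallback for unclear cases


@dataclass
class Workload:
    # Hypothetical attributes gathered during the audit.
    name: str
    high_value: bool
    stable: bool
    clear_owner: bool
    low_usage: bool
    clear_purpose: bool
    high_risk: bool  # includes politically sensitive domains
    controls_ready: bool = False


def triage(w: Workload) -> Treatment:
    # Order matters: the risk gate comes first, then retirement, then effort.
    if w.high_risk and not w.controls_ready:
        return Treatment.DELAY
    if w.low_usage and not w.clear_purpose:
        return Treatment.RETIRE_SOON
    if w.high_value and not w.stable:
        return Treatment.REDESIGN_NEXT
    if w.stable and w.clear_owner:
        return Treatment.MOVE_FIRST
    return Treatment.NEEDS_REVIEW
```

Putting the risk gate first is a deliberate choice in this sketch: a high-value workload in a sensitive domain still waits until controls are ready.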

3. Redesign the ingestion pattern, not just the destination

Too many teams move the same file drops, same overnight jobs, and same fragile sequencing into a cloud service and call it modernization. That is just relocation.

The better question is whether the workload still needs traditional ETL at all. In many cases, ETL modernization means shifting from monolithic, job-based processing to modular pipelines with clearer contracts, more frequent loads, lineage, and testable logic. Microsoft’s architecture guidance now frames ETL and ELT choices around workload needs, latency, orchestration, and reliability patterns such as checkpointing and idempotency.

4. Build the governance model before broad ingestion

Governance should not appear at the end as a compliance checklist. AWS notes that governance is what helps the right people securely find, access, and share the right data when needed. Databricks makes a similar point in its 2026 governance guidance, stressing centralized management, quality standards, and security across data and AI assets.

In practice, that means defining before full rollout:

- Data domains and ownership

- Access rules by role and sensitivity

- Naming and catalog standards

- Lineage expectations

- Quality thresholds

- Retention and archival policy

Without this, a new data lake quickly becomes an old problem with cheaper storage.
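One way to make "define before full rollout" enforceable is to treat the list above as a record that must be complete before a dataset is onboarded. The sketch below assumes a hypothetical `DatasetPolicy` record and a `<domain>.<dataset>` snake-case naming rule; both are illustrations, not a standard.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Assumed naming convention: <domain>.<dataset>, lowercase snake case.
NAME_PATTERN = re.compile(r"^[a-z]+(_[a-z0-9]+)*\.[a-z]+(_[a-z0-9]+)*$")


@dataclass
class DatasetPolicy:
    # Hypothetical governance record; one per dataset.
    name: str
    domain: Optional[str] = None
    business_owner: Optional[str] = None
    technical_owner: Optional[str] = None
    sensitivity: Optional[str] = None  # e.g. "public", "internal", "restricted"
    retention_days: Optional[int] = None
    allowed_roles: List[str] = field(default_factory=list)
    upstream_sources: List[str] = field(default_factory=list)  # lineage
    quality_thresholds: Dict[str, float] = field(default_factory=dict)


def governance_gaps(policy):
    """List what is still undefined; an empty list means ready to onboard."""
    gaps = []
    if not NAME_PATTERN.match(policy.name):
        gaps.append("name does not follow <domain>.<dataset> convention")
    for attr in ("domain", "business_owner", "technical_owner",
                 "sensitivity", "retention_days"):
        if getattr(policy, attr) is None:
            gaps.append(f"missing {attr}")
    for attr in ("allowed_roles", "upstream_sources", "quality_thresholds"):
        if not getattr(policy, attr):
            gaps.append(f"missing {attr}")
    return gaps
```

A check like this can run in the onboarding pipeline so that incomplete definitions block ingestion instead of surfacing later as audit findings.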

5. Prove one domain end to end

Pick a business domain where the pain is obvious and measurable. Finance close. Customer analytics. Inventory visibility. Claims intake. Something with enough complexity to matter but not so much that it stalls the program.

Your pilot should prove five things:

- Source capture works reliably

- Data quality can be measured

- Business definitions are consistent

- Security controls hold up in practice

- Users trust the output enough to replace the old path

This is where data modernization services either earn credibility or lose the room.
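"Data quality can be measured" is easier to hold a pilot to when the measure is written down. A minimal sketch, assuming records arrive as dictionaries; the `quality_report` helper, the two metrics, and the default thresholds are hypothetical and would be set per domain.

```python
def quality_report(rows, key, required, max_null_rate=0.01, max_duplicate_rate=0.0):
    """Measure completeness and key uniqueness for a list of dict records."""
    total = len(rows)
    if total == 0:
        # An empty load is treated as a failure rather than a trivial pass.
        return {"rows": 0, "null_rate": None, "duplicate_rate": None, "passed": False}
    # A row is incomplete if any required column is missing or None.
    incomplete = sum(1 for r in rows if any(r.get(col) is None for col in required))
    distinct_keys = len({r.get(key) for r in rows})
    null_rate = incomplete / total
    duplicate_rate = (total - distinct_keys) / total
    return {
        "rows": total,
        "null_rate": null_rate,
        "duplicate_rate": duplicate_rate,
        "passed": null_rate <= max_null_rate and duplicate_rate <= max_duplicate_rate,
    }


sample = [
    {"claim_id": 1, "amount": 120.0},
    {"claim_id": 2, "amount": None},
    {"claim_id": 2, "amount": 75.0},
    {"claim_id": 3, "amount": 40.0},
]
print(quality_report(sample, key="claim_id", required=["amount"]))
```

Publishing the same report for the old path and the new path gives users a concrete basis for trusting the replacement.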

6. Industrialize only after the model works

Once one domain is stable, repeat the pattern with templates, automation, and operating standards. Google Cloud’s migration guidance makes the point clearly: tools matter, but execution works best when you standardize proven methods, testing, and automation instead of improvising for every workload.

That is the moment to expand. Not earlier.

Choosing the right tools without buying a problem you do not need

Tool selection gets too much attention, but it still matters. The mistake is choosing tools before choosing the operating model.

A good selection process asks four blunt questions:

- Does the platform support both ingestion and governance well enough for your data profile?

- Can it handle structured and unstructured workloads without forcing needless duplication?

- Does it give teams lineage, discoverability, and policy control in one place?

- Can your engineers support it without building a second job just to run the platform?

For many organizations, the target design now centers on a governed data lake or lakehouse pattern rather than separate, loosely connected components. AWS, Databricks, and Google Cloud all now emphasize governance, cataloging, and controlled access as first-class design concerns, not afterthoughts.

What matters most is fit.

| Decision area | What to look for | Red flag |
| --- | --- | --- |
| Ingestion | Batch plus near-real-time support | Separate tools for every source type |
| Processing | SQL, Python, orchestration, testing | Heavy custom code for simple jobs |
| Governance | Catalog, access control, lineage | Governance handled outside the platform |
| Cost control | Clear visibility into compute and storage use | Charges are hard to attribute |
| Team fit | Skills your engineers can actually support | Platform depends on a tiny specialist group |

This is where experienced data modernization services teams make a real difference. They know when not to overbuy.

Data governance is where most roadmaps either become durable or drift

Governance is often discussed in abstract language. It should be much more concrete.

The core governance question is this: who is allowed to define, approve, change, and consume a dataset, and how will everyone know what changed?

If you cannot answer that quickly, the platform is not production-ready.

Strong governance in modern data platforms usually rests on five controls:

- Ownership: every critical dataset has a named business and technical owner

- Classification: data is tagged by sensitivity, regulatory exposure, and usage intent

- Quality rules: thresholds are documented and monitored

- Lineage: teams can trace source to consumption

- Access review: permissions are reviewed on a schedule, not only after incidents

This is one reason ETL modernization is often more important than teams first assume. When pipelines become modular and testable, governance becomes much easier to enforce. When logic remains buried in opaque jobs, governance becomes detective work.
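Of the five controls, access review is the one most often left to memory. A small sketch of a scheduled check, assuming grants are exported with the date of their last review; the `AccessGrant` record and the 90-day interval are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AccessGrant:
    # Hypothetical export from an access management system.
    principal: str
    dataset: str
    last_reviewed: date


def overdue_reviews(grants, today, review_interval_days=90):
    """Return grants whose last review is older than the allowed interval."""
    deadline = today - timedelta(days=review_interval_days)
    return [g for g in grants if g.last_reviewed < deadline]


grants = [
    AccessGrant("analyst_a", "finance.gl_entries", date(2025, 1, 10)),
    AccessGrant("svc_reporting", "finance.gl_entries", date(2025, 6, 1)),
]
for g in overdue_reviews(grants, today=date(2025, 6, 30)):
    print(g.principal, g.dataset)
```

Run on a schedule, a check like this turns "reviewed on a schedule, not only after incidents" into a list someone has to clear.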

What cloud data platforms actually do better

The strongest case for the cloud is not storage cost alone. It is operational clarity.

Modern cloud data platforms help teams:

- Separate storage from compute where appropriate

- Reduce dependency on fixed batch windows

- Improve observability across jobs and datasets

- Support policy-driven access instead of manual approvals

- Give analysts and engineers a shared view of trusted assets
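As an illustration of policy-driven access, the sketch below decides read access from a role's clearance and a dataset's sensitivity tag instead of a per-request approval. The role names, sensitivity levels, and the `can_read` helper are assumptions for the example; cloud platforms expose their own policy engines for this.

```python
# Hypothetical sensitivity levels, ordered from least to most restricted.
SENSITIVITY_RANK = {"public": 0, "internal": 1, "restricted": 2}

# Hypothetical mapping of roles to the highest level they may read.
ROLE_CLEARANCE = {
    "contractor": "public",
    "analyst": "internal",
    "data_engineer": "restricted",
}


def can_read(role, dataset_sensitivity):
    """Allow reads only when the role's clearance covers the dataset's tag."""
    clearance = ROLE_CLEARANCE.get(role)
    if clearance is None or dataset_sensitivity not in SENSITIVITY_RANK:
        return False  # deny by default for unknown roles or untagged data
    return SENSITIVITY_RANK[clearance] >= SENSITIVITY_RANK[dataset_sensitivity]


print(can_read("analyst", "internal"))
print(can_read("analyst", "restricted"))
```

The deny-by-default branch is the design choice worth noting: untagged data and unknown roles get no access until someone classifies them.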

McKinsey notes that legacy-to-cloud programs succeed when leadership treats the move as a business and operating decision, not just an infrastructure exercise. That matches what practitioners see on the ground. The win is not “we moved.” The win is “we can now change safely, govern consistently, and deliver usable data faster.”

That is the business case data modernization services should be built around.

Conclusion

The shortest route from legacy to cloud is usually the most expensive one later.

A better roadmap starts with inventory, ownership, and retirement decisions. Then it moves through phased migration, careful tool selection, governance by design, and domain-by-domain proof. That is how data modernization services create lasting value instead of another expensive platform layer.

If there is one idea worth holding onto, it is this: modern data programs fail when they treat history as baggage to copy. They succeed when they treat history as evidence to review. Which feeds still matter. Which rules still make sense. Which reports still guide decisions. Which controls belong at the center.

That is the difference between a migration project and a real data engineering roadmap.